Stream TTS in real time

Qwen Cloud provides two families of real-time speech synthesis models: CosyVoice for streaming synthesis with SSML control, and Qwen-TTS-Realtime for real-time synthesis with instruction-based voice control, voice cloning, and voice design.

For more details, see Model comparison.

For complete code examples, see Getting started.

Core features

- Generates high-fidelity speech in real time with natural pronunciation in multiple languages, such as Chinese and English

- Supports voice customization through Qwen-TTS-Realtime voice cloning and voice design

- Supports streaming input and output with low-latency responses for real-time interactive scenarios

- Adjustable speech rate, pitch, volume, and bitrate for fine-grained control over vocal expression

- Compatible with mainstream audio formats, supporting output up to 48 kHz sample rate

- Supports instruction control, enabling natural language instructions to control vocal expressiveness

Availability

- CosyVoice

- Qwen-TTS-Realtime

Supported models:When you invoke the following models, use an API key.

- CosyVoice: cosyvoice-v3-plus, cosyvoice-v3-flash

Supported models:Use an API Key when calling the following models:

- Qwen3-TTS-Instruct-Flash-Realtime: qwen3-tts-instruct-flash-realtime (stable version, equivalent to qwen3-tts-instruct-flash-realtime-2026-01-22), qwen3-tts-instruct-flash-realtime-2026-01-22 (latest snapshot)

- Qwen3-TTS-VD-Realtime: qwen3-tts-vd-realtime-2026-01-15 (latest snapshot), qwen3-tts-vd-realtime-2025-12-16 (snapshot)

- Qwen3-TTS-VC-Realtime: qwen3-tts-vc-realtime-2026-01-15 (latest snapshot), qwen3-tts-vc-realtime-2025-11-27 (snapshot)

- Qwen3-TTS-Flash-Realtime: qwen3-tts-flash-realtime (stable version, equivalent to qwen3-tts-flash-realtime-2025-11-27), qwen3-tts-flash-realtime-2025-11-27 (latest snapshot), qwen3-tts-flash-realtime-2025-09-18 (snapshot)

Model selection

- CosyVoice

- Qwen-TTS-Realtime

| Scenario | Recommended | Reason | Notes |

|---|---|---|---|

| Intelligent customer service / Voice assistant | cosyvoice-v3-flash | Lower cost than plus models with support for streaming interaction and emotional expression, delivering fast responses at an affordable price point. | |

| Educational applications (including formula reading) | cosyvoice-v3-flash, cosyvoice-v3-plus | Supports LaTeX formula-to-speech conversion, ideal for mathematics, physics, and chemistry instruction. | cosyvoice-v3-plus has higher costs ($0.286706 per 10,000 characters). |

| Structured voice broadcasting (news/announcements) | cosyvoice-v3-plus, cosyvoice-v3-flash | Supports SSML for controlling speech rate, pauses, and pronunciation to enhance broadcast professionalism. | Implement the SSML generation logic independently. This model does not support emotion settings. |

| Precise speech-text alignment for scenarios such as caption generation, lesson playback, and dictation practice | cosyvoice-v3-flash, cosyvoice-v3-plus | Supports timestamp output to synchronize the synthesized speech with the original text. | Manually enable the timestamp feature. |

| Multilingual international products | cosyvoice-v3-flash, cosyvoice-v3-plus | Supports multiple languages. |

| Scenario | Recommended model | Reason |

|---|---|---|

| Voice customization for brand identity, exclusive voices, or extended system voices (based on text descriptions) | qwen3-tts-vd-realtime-2026-01-15 | Supports voice design. Creates customized voices from text descriptions without audio samples. Ideal for designing brand-exclusive voices from scratch. |

| Voice customization for brand identity, exclusive voices, or extended system voices (based on audio samples) | qwen3-tts-vc-realtime-2026-01-15 | Supports voice cloning. Quickly replicates voices from real audio samples to create lifelike brand voiceprints with high fidelity and consistency. |

| Emotional content production (audiobooks, radio dramas, game/animation dubbing) | qwen3-tts-instruct-flash-realtime | Supports instruction control. Precisely controls tone, speed, emotion, and character personality through natural language descriptions. Ideal for scenarios requiring rich expressiveness and character development. |

| Professional broadcasting (news, documentaries, advertising) | qwen3-tts-instruct-flash-realtime | Supports instruction control. Describes broadcasting styles and tonal characteristics (such as "authoritative and solemn" or "casual and friendly"). Suitable for professional content production. |

| Intelligent customer service and conversational bots | qwen3-tts-flash-realtime, qwen3-tts-instruct-flash-realtime | Supports streaming input and output with adjustable speech rate and pitch. The instruct version supports instruction control to dynamically adjust tone (such as reassuring, enthusiastic, or professional) based on conversation context. |

| Multilingual content broadcasting | qwen3-tts-flash-realtime, qwen3-tts-instruct-flash-realtime | Supports multiple languages and Chinese dialects, meeting global content distribution needs. |

| Audiobook reading and general content production | qwen3-tts-flash-realtime, qwen3-tts-instruct-flash-realtime | Adjustable volume, speech rate, and pitch to meet fine-grained production requirements for audiobooks, podcasts, and similar content. |

| E-commerce livestreaming and short video dubbing | qwen3-tts-flash-realtime, qwen3-tts-instruct-flash-realtime | Supports mp3/opus compressed formats, suitable for bandwidth-constrained scenarios. |

Getting started

- CosyVoice

- Qwen-TTS-Realtime

For more code examples, see GitHub.Get an API key and set it as an environment variable. To use the SDK, install it.

- Use system voices

Save synthesized audio to a file

For available voices, see the Voice list.- Python

- Java

Copy

# coding=utf-8

import os

import dashscope

from dashscope.audio.tts_v2 import *

# If you have not configured environment variables, replace the following line with your API key: dashscope.api_key = "sk-xxx"

dashscope.api_key = os.environ.get('DASHSCOPE_API_KEY')

dashscope.base_websocket_api_url='wss://dashscope-intl.aliyuncs.com/api-ws/v1/inference'

# Model

# cosyvoice-v3-flash/cosyvoice-v3-plus: Use voices such as longanyang.

# Each voice supports different languages. When synthesizing non-Chinese languages such as Japanese or Korean, select a voice that supports the corresponding language. For more information, see the CosyVoice voice list.

model = "cosyvoice-v3-flash"

# Voice

voice = "longanyang"

# Instantiate SpeechSynthesizer and pass request parameters such as model and voice in the constructor.

synthesizer = SpeechSynthesizer(model=model, voice=voice)

# Send the text to be synthesized and get the binary audio.

audio = synthesizer.call("How is the weather today?")

# The first time you send text, a WebSocket connection is established. The first packet delay includes the connection establishment time.

print('[Metric] Request ID: {}, First packet delay: {} ms'.format(

synthesizer.get_last_request_id(),

synthesizer.get_first_package_delay()))

# Save the audio locally.

with open('output.mp3', 'wb') as f:

f.write(audio)

Copy

import com.alibaba.dashscope.audio.ttsv2.SpeechSynthesisParam;

import com.alibaba.dashscope.audio.ttsv2.SpeechSynthesizer;

import com.alibaba.dashscope.utils.Constants;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.nio.ByteBuffer;

public class Main {

// Model

// cosyvoice-v3-flash/cosyvoice-v3-plus: Use voices such as longanyang.

// Each voice supports different languages. When synthesizing non-Chinese languages such as Japanese or Korean, select a voice that supports the corresponding language. For more information, see the CosyVoice voice list.

private static String model = "cosyvoice-v3-flash";

// Voice

private static String voice = "longanyang";

public static void streamAudioDataToSpeaker() {

// Request parameters

SpeechSynthesisParam param =

SpeechSynthesisParam.builder()

// If you have not configured environment variables, replace the following line with your API key: .apiKey("sk-xxx")

.apiKey(System.getenv("DASHSCOPE_API_KEY"))

.model(model) // Model

.voice(voice) // Voice

.build();

// Synchronous mode: Disable callback (second parameter is null).

SpeechSynthesizer synthesizer = new SpeechSynthesizer(param, null);

ByteBuffer audio = null;

try {

// Block until audio returns.

audio = synthesizer.call("How is the weather today?");

} catch (Exception e) {

throw new RuntimeException(e);

} finally {

// Close the WebSocket connection when the task ends.

synthesizer.getDuplexApi().close(1000, "bye");

}

if (audio != null) {

// Save the audio data to the local file "output.mp3".

File file = new File("output.mp3");

// The first time you send text, a WebSocket connection is established. The first packet delay includes the connection establishment time.

System.out.println(

"[Metric] Request ID: "

+ synthesizer.getLastRequestId()

+ ", First packet delay (ms): "

+ synthesizer.getFirstPackageDelay());

try (FileOutputStream fos = new FileOutputStream(file)) {

fos.write(audio.array());

} catch (IOException e) {

throw new RuntimeException(e);

}

}

}

public static void main(String[] args) {

Constants.baseWebsocketApiUrl = "wss://dashscope-intl.aliyuncs.com/api-ws/v1/inference";

streamAudioDataToSpeaker();

System.exit(0);

}

}

Convert LLM-generated text to speech in real time and play it through speakers

Play text from a Qwen model (qwen3.5-flash) as speech in real time on a local device.- Python

- Java

Before you run the Python example, install a third-party audio playback library using pip.

Copy

# coding=utf-8

# Installation instructions for pyaudio:

# APPLE Mac OS X

# brew install portaudio

# pip install pyaudio

# Debian/Ubuntu

# sudo apt-get install python-pyaudio python3-pyaudio

# or

# pip install pyaudio

# CentOS

# sudo yum install -y portaudio portaudio-devel && pip install pyaudio

# Microsoft Windows

# python -m pip install pyaudio

import os

import pyaudio

import dashscope

from dashscope.audio.tts_v2 import *

from http import HTTPStatus

from dashscope import Generation

# If you have not configured environment variables, replace the following line with your API key: dashscope.api_key = "sk-xxx"

dashscope.api_key = os.environ.get('DASHSCOPE_API_KEY')

dashscope.base_websocket_api_url='wss://dashscope-intl.aliyuncs.com/api-ws/v1/inference'

# cosyvoice-v3-flash/cosyvoice-v3-plus: Use voices such as longanyang.

# Each voice supports different languages. When synthesizing non-Chinese languages such as Japanese or Korean, select a voice that supports the corresponding language. For more information, see the CosyVoice voice list.

model = "cosyvoice-v3-flash"

voice = "longanyang"

class Callback(ResultCallback):

_player = None

_stream = None

def on_open(self):

print("websocket is open.")

self._player = pyaudio.PyAudio()

self._stream = self._player.open(

format=pyaudio.paInt16, channels=1, rate=22050, output=True

)

def on_complete(self):

print("speech synthesis task complete successfully.")

def on_error(self, message: str):

print(f"speech synthesis task failed, {message}")

def on_close(self):

print("websocket is closed.")

# stop player

self._stream.stop_stream()

self._stream.close()

self._player.terminate()

def on_event(self, message):

print(f"recv speech synthsis message {message}")

def on_data(self, data: bytes) -> None:

print("audio result length:", len(data))

self._stream.write(data)

def synthesizer_with_llm():

callback = Callback()

synthesizer = SpeechSynthesizer(

model=model,

voice=voice,

format=AudioFormat.PCM_22050HZ_MONO_16BIT,

callback=callback,

)

messages = [{"role": "user", "content": "Please introduce yourself"}]

responses = Generation.call(

model="qwen3.5-flash",

messages=messages,

result_format="message", # set result format as 'message'

stream=True, # enable stream output

incremental_output=True, # enable incremental output

)

for response in responses:

if response.status_code == HTTPStatus.OK:

print(response.output.choices[0]["message"]["content"], end="")

synthesizer.streaming_call(response.output.choices[0]["message"]["content"])

else:

print(

"Request id: %s, Status code: %s, error code: %s, error message: %s"

% (

response.request_id,

response.status_code,

response.code,

response.message,

)

)

synthesizer.streaming_complete()

print('requestId: ', synthesizer.get_last_request_id())

if __name__ == "__main__":

synthesizer_with_llm()

Get an API key and install the SDK before running the code.For more example code, see GitHub.

- Use system voice

- Use cloned voice

- Use designed voice

See Supported voices for available voices.Replace the

model parameter with qwen3-tts-instruct-flash-realtime and set instructions using the instructions parameter to use the instruction control feature.- DashScope SDK

- WebSocket API

- Python

- Java

Server commit mode:Commit mode:

Copy

import os

import base64

import threading

import time

import dashscope

from dashscope.audio.qwen_tts_realtime import *

qwen_tts_realtime: QwenTtsRealtime = None

text_to_synthesize = [

'Right? I love supermarkets like this.',

'Especially during Chinese New Year,',

'I go shopping at supermarkets.',

'And I feel',

'absolutely thrilled!',

'I want to buy so many things!'

]

DO_VIDEO_TEST = False

def init_dashscope_api_key():

"""

Set your DashScope API key. More information:

https://github.com/aliyun/alibabacloud-bailian-speech-demo/blob/master/PREREQUISITES.md

"""

if 'DASHSCOPE_API_KEY' in os.environ:

dashscope.api_key = os.environ[

'DASHSCOPE_API_KEY'] # Load API key from environment variable DASHSCOPE_API_KEY

else:

dashscope.api_key = 'your-dashscope-api-key' # Set API key manually

class MyCallback(QwenTtsRealtimeCallback):

def __init__(self):

self.complete_event = threading.Event()

self.file = open('result_24k.pcm', 'wb')

def on_open(self) -> None:

print('connection opened, init player')

def on_close(self, close_status_code, close_msg) -> None:

self.file.close()

print('connection closed with code: {}, msg: {}, destroy player'.format(close_status_code, close_msg))

def on_event(self, response: str) -> None:

try:

global qwen_tts_realtime

type = response['type']

if 'session.created' == type:

print('start session: {}'.format(response['session']['id']))

if 'response.audio.delta' == type:

recv_audio_b64 = response['delta']

self.file.write(base64.b64decode(recv_audio_b64))

if 'response.done' == type:

print(f'response {qwen_tts_realtime.get_last_response_id()} done')

if 'session.finished' == type:

print('session finished')

self.complete_event.set()

except Exception as e:

print('[Error] {}'.format(e))

return

def wait_for_finished(self):

self.complete_event.wait()

if __name__ == '__main__':

init_dashscope_api_key()

print('Initializing ...')

callback = MyCallback()

qwen_tts_realtime = QwenTtsRealtime(

# To use instruction control, replace the model with qwen3-tts-instruct-flash-realtime

model='qwen3-tts-flash-realtime',

callback=callback,

url='wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime'

)

qwen_tts_realtime.connect()

qwen_tts_realtime.update_session(

voice = 'Cherry',

response_format = AudioFormat.PCM_24000HZ_MONO_16BIT,

# To use instruction control, uncomment the following lines and replace the model with qwen3-tts-instruct-flash-realtime

# instructions='Speak quickly with a rising intonation, suitable for introducing fashion products.',

# optimize_instructions=True,

mode = 'server_commit'

)

for text_chunk in text_to_synthesize:

print(f'send text: {text_chunk}')

qwen_tts_realtime.append_text(text_chunk)

time.sleep(0.1)

qwen_tts_realtime.finish()

callback.wait_for_finished()

print('[Metric] session: {}, first audio delay: {}'.format(

qwen_tts_realtime.get_session_id(),

qwen_tts_realtime.get_first_audio_delay(),

))

Copy

import base64

import os

import threading

import dashscope

from dashscope.audio.qwen_tts_realtime import *

qwen_tts_realtime: QwenTtsRealtime = None

text_to_synthesize = [

'This is the first sentence.',

'This is the second sentence.',

'This is the third sentence.',

]

DO_VIDEO_TEST = False

def init_dashscope_api_key():

"""

Set your DashScope API key. More information:

https://github.com/aliyun/alibabacloud-bailian-speech-demo/blob/master/PREREQUISITES.md

"""

if 'DASHSCOPE_API_KEY' in os.environ:

dashscope.api_key = os.environ[

'DASHSCOPE_API_KEY'] # Load API key from environment variable DASHSCOPE_API_KEY

else:

dashscope.api_key = 'your-dashscope-api-key' # Set API key manually

class MyCallback(QwenTtsRealtimeCallback):

def __init__(self):

super().__init__()

self.response_counter = 0

self.complete_event = threading.Event()

self.file = open(f'result_{self.response_counter}_24k.pcm', 'wb')

def reset_event(self):

self.response_counter += 1

self.file = open(f'result_{self.response_counter}_24k.pcm', 'wb')

self.complete_event = threading.Event()

def on_open(self) -> None:

print('connection opened, init player')

def on_close(self, close_status_code, close_msg) -> None:

print('connection closed with code: {}, msg: {}, destroy player'.format(close_status_code, close_msg))

def on_event(self, response: str) -> None:

try:

global qwen_tts_realtime

type = response['type']

if 'session.created' == type:

print('start session: {}'.format(response['session']['id']))

if 'response.audio.delta' == type:

recv_audio_b64 = response['delta']

self.file.write(base64.b64decode(recv_audio_b64))

if 'response.done' == type:

print(f'response {qwen_tts_realtime.get_last_response_id()} done')

self.complete_event.set()

self.file.close()

if 'session.finished' == type:

print('session finished')

self.complete_event.set()

except Exception as e:

print('[Error] {}'.format(e))

return

def wait_for_response_done(self):

self.complete_event.wait()

if __name__ == '__main__':

init_dashscope_api_key()

print('Initializing ...')

callback = MyCallback()

qwen_tts_realtime = QwenTtsRealtime(

# To use instruction control, replace the model with qwen3-tts-instruct-flash-realtime

model='qwen3-tts-flash-realtime',

callback=callback,

url='wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime'

)

qwen_tts_realtime.connect()

qwen_tts_realtime.update_session(

voice = 'Cherry',

response_format = AudioFormat.PCM_24000HZ_MONO_16BIT,

# To use instruction control, uncomment the following lines and replace the model with qwen3-tts-instruct-flash-realtime

# instructions='Speak quickly with a rising intonation, suitable for introducing fashion products.',

# optimize_instructions=True,

mode = 'commit'

)

print(f'send text: {text_to_synthesize[0]}')

qwen_tts_realtime.append_text(text_to_synthesize[0])

qwen_tts_realtime.commit()

callback.wait_for_response_done()

callback.reset_event()

print(f'send text: {text_to_synthesize[1]}')

qwen_tts_realtime.append_text(text_to_synthesize[1])

qwen_tts_realtime.commit()

callback.wait_for_response_done()

callback.reset_event()

print(f'send text: {text_to_synthesize[2]}')

qwen_tts_realtime.append_text(text_to_synthesize[2])

qwen_tts_realtime.commit()

callback.wait_for_response_done()

qwen_tts_realtime.finish()

print('[Metric] session: {}, first audio delay: {}'.format(

qwen_tts_realtime.get_session_id(),

qwen_tts_realtime.get_first_audio_delay(),

))

Server commit mode:Commit mode:

Copy

import com.alibaba.dashscope.audio.qwen_tts_realtime.*;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.google.gson.JsonObject;

import javax.sound.sampled.LineUnavailableException;

import javax.sound.sampled.SourceDataLine;

import javax.sound.sampled.AudioFormat;

import javax.sound.sampled.DataLine;

import javax.sound.sampled.AudioSystem;

import java.io.FileNotFoundException;

import java.io.IOException;

import java.util.Base64;

import java.util.Queue;

import java.util.concurrent.CountDownLatch;

import java.util.concurrent.atomic.AtomicReference;

import java.util.concurrent.ConcurrentLinkedQueue;

import java.util.concurrent.atomic.AtomicBoolean;

public class Main {

static String[] textToSynthesize = {

"Right? I just really love this kind of supermarket",

"Especially during the New Year",

"Going to the supermarket",

"Makes me feel",

"Super, super happy!",

"I want to buy so many things!"

};

// Real-time PCM audio player class

public static class RealtimePcmPlayer {

private int sampleRate;

private SourceDataLine line;

private AudioFormat audioFormat;

private Thread decoderThread;

private Thread playerThread;

private AtomicBoolean stopped = new AtomicBoolean(false);

private Queue<String> b64AudioBuffer = new ConcurrentLinkedQueue<>();

private Queue<byte[]> RawAudioBuffer = new ConcurrentLinkedQueue<>();

// The constructor initializes the audio format and audio line.

public RealtimePcmPlayer(int sampleRate) throws LineUnavailableException {

this.sampleRate = sampleRate;

this.audioFormat = new AudioFormat(this.sampleRate, 16, 1, true, false);

DataLine.Info info = new DataLine.Info(SourceDataLine.class, audioFormat);

line = (SourceDataLine) AudioSystem.getLine(info);

line.open(audioFormat);

line.start();

decoderThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

String b64Audio = b64AudioBuffer.poll();

if (b64Audio != null) {

byte[] rawAudio = Base64.getDecoder().decode(b64Audio);

RawAudioBuffer.add(rawAudio);

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

playerThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

byte[] rawAudio = RawAudioBuffer.poll();

if (rawAudio != null) {

try {

playChunk(rawAudio);

} catch (IOException e) {

throw new RuntimeException(e);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

decoderThread.start();

playerThread.start();

}

// Plays an audio chunk and blocks until playback is complete.

private void playChunk(byte[] chunk) throws IOException, InterruptedException {

if (chunk == null || chunk.length == 0) return;

int bytesWritten = 0;

while (bytesWritten < chunk.length) {

bytesWritten += line.write(chunk, bytesWritten, chunk.length - bytesWritten);

}

int audioLength = chunk.length / (this.sampleRate*2/1000);

// Waits for the audio in the buffer to finish playing.

Thread.sleep(audioLength - 10);

}

public void write(String b64Audio) {

b64AudioBuffer.add(b64Audio);

}

public void cancel() {

b64AudioBuffer.clear();

RawAudioBuffer.clear();

}

public void waitForComplete() throws InterruptedException {

while (!b64AudioBuffer.isEmpty() || !RawAudioBuffer.isEmpty()) {

Thread.sleep(100);

}

line.drain();

}

public void shutdown() throws InterruptedException {

stopped.set(true);

decoderThread.join();

playerThread.join();

if (line != null && line.isRunning()) {

line.drain();

line.close();

}

}

}

public static void main(String[] args) throws InterruptedException, LineUnavailableException, FileNotFoundException {

QwenTtsRealtimeParam param = QwenTtsRealtimeParam.builder()

// To use the instruction control feature, replace the model with qwen3-tts-instruct-flash-realtime.

.model("qwen3-tts-flash-realtime")

.url("wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime")

.apikey(System.getenv("DASHSCOPE_API_KEY"))

.build();

AtomicReference<CountDownLatch> completeLatch = new AtomicReference<>(new CountDownLatch(1));

final AtomicReference<QwenTtsRealtime> qwenTtsRef = new AtomicReference<>(null);

// Creates a real-time audio player instance.

RealtimePcmPlayer audioPlayer = new RealtimePcmPlayer(24000);

QwenTtsRealtime qwenTtsRealtime = new QwenTtsRealtime(param, new QwenTtsRealtimeCallback() {

@Override

public void onOpen() {

// Handles the event when the connection is established.

}

@Override

public void onEvent(JsonObject message) {

String type = message.get("type").getAsString();

switch(type) {

case "session.created":

// Handles the event when the session is created.

break;

case "response.audio.delta":

String recvAudioB64 = message.get("delta").getAsString();

// Plays the audio in real time.

audioPlayer.write(recvAudioB64);

break;

case "response.done":

// Handles the event when the response is complete.

break;

case "session.finished":

// Handles the event when the session is finished.

completeLatch.get().countDown();

default:

break;

}

}

@Override

public void onClose(int code, String reason) {

// Handles the event when the connection is closed.

}

});

qwenTtsRef.set(qwenTtsRealtime);

try {

qwenTtsRealtime.connect();

} catch (NoApiKeyException e) {

throw new RuntimeException(e);

}

QwenTtsRealtimeConfig config = QwenTtsRealtimeConfig.builder()

.voice("Cherry")

.responseFormat(QwenTtsRealtimeAudioFormat.PCM_24000HZ_MONO_16BIT)

.mode("server_commit")

// To use the instruction control feature, uncomment the following lines and replace the model with qwen3-tts-instruct-flash-realtime.

// .instructions("")

// .optimizeInstructions(true)

.build();

qwenTtsRealtime.updateSession(config);

for (String text:textToSynthesize) {

qwenTtsRealtime.appendText(text);

Thread.sleep(100);

}

qwenTtsRealtime.finish();

completeLatch.get().await();

qwenTtsRealtime.close();

// Waits for audio playback to complete and then shuts down the player.

audioPlayer.waitForComplete();

audioPlayer.shutdown();

System.exit(0);

}

}

Copy

import com.alibaba.dashscope.audio.qwen_tts_realtime.*;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.google.gson.JsonObject;

import javax.sound.sampled.LineUnavailableException;

import javax.sound.sampled.SourceDataLine;

import javax.sound.sampled.AudioFormat;

import javax.sound.sampled.DataLine;

import javax.sound.sampled.AudioSystem;

import java.io.File;

import java.io.FileNotFoundException;

import java.io.FileOutputStream;

import java.io.IOException;

import java.util.Base64;

import java.util.Queue;

import java.util.Scanner;

import java.util.concurrent.CountDownLatch;

import java.util.concurrent.atomic.AtomicReference;

import java.util.concurrent.ConcurrentLinkedQueue;

import java.util.concurrent.atomic.AtomicBoolean;

public class commit {

// Real-time PCM audio player class

public static class RealtimePcmPlayer {

private int sampleRate;

private SourceDataLine line;

private AudioFormat audioFormat;

private Thread decoderThread;

private Thread playerThread;

private AtomicBoolean stopped = new AtomicBoolean(false);

private Queue<String> b64AudioBuffer = new ConcurrentLinkedQueue<>();

private Queue<byte[]> RawAudioBuffer = new ConcurrentLinkedQueue<>();

// The constructor initializes the audio format and audio line.

public RealtimePcmPlayer(int sampleRate) throws LineUnavailableException {

this.sampleRate = sampleRate;

this.audioFormat = new AudioFormat(this.sampleRate, 16, 1, true, false);

DataLine.Info info = new DataLine.Info(SourceDataLine.class, audioFormat);

line = (SourceDataLine) AudioSystem.getLine(info);

line.open(audioFormat);

line.start();

decoderThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

String b64Audio = b64AudioBuffer.poll();

if (b64Audio != null) {

byte[] rawAudio = Base64.getDecoder().decode(b64Audio);

RawAudioBuffer.add(rawAudio);

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

playerThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

byte[] rawAudio = RawAudioBuffer.poll();

if (rawAudio != null) {

try {

playChunk(rawAudio);

} catch (IOException e) {

throw new RuntimeException(e);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

decoderThread.start();

playerThread.start();

}

// Plays an audio chunk and blocks until playback is complete.

private void playChunk(byte[] chunk) throws IOException, InterruptedException {

if (chunk == null || chunk.length == 0) return;

int bytesWritten = 0;

while (bytesWritten < chunk.length) {

bytesWritten += line.write(chunk, bytesWritten, chunk.length - bytesWritten);

}

int audioLength = chunk.length / (this.sampleRate*2/1000);

// Waits for the audio in the buffer to finish playing.

Thread.sleep(audioLength - 10);

}

public void write(String b64Audio) {

b64AudioBuffer.add(b64Audio);

}

public void cancel() {

b64AudioBuffer.clear();

RawAudioBuffer.clear();

}

public void waitForComplete() throws InterruptedException {

// Waits for all audio data in the buffers to finish playing.

while (!b64AudioBuffer.isEmpty() || !RawAudioBuffer.isEmpty()) {

Thread.sleep(100);

}

// Waits for the audio line to finish playing.

line.drain();

}

public void shutdown() throws InterruptedException {

stopped.set(true);

decoderThread.join();

playerThread.join();

if (line != null && line.isRunning()) {

line.drain();

line.close();

}

}

}

public static void main(String[] args) throws InterruptedException, LineUnavailableException, FileNotFoundException {

Scanner scanner = new Scanner(System.in);

QwenTtsRealtimeParam param = QwenTtsRealtimeParam.builder()

// To use the instruction control feature, replace the model with qwen3-tts-instruct-flash-realtime.

.model("qwen3-tts-flash-realtime")

.url("wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime")

.apikey(System.getenv("DASHSCOPE_API_KEY"))

.build();

AtomicReference<CountDownLatch> completeLatch = new AtomicReference<>(new CountDownLatch(1));

// Creates a real-time player instance.

RealtimePcmPlayer audioPlayer = new RealtimePcmPlayer(24000);

final AtomicReference<QwenTtsRealtime> qwenTtsRef = new AtomicReference<>(null);

QwenTtsRealtime qwenTtsRealtime = new QwenTtsRealtime(param, new QwenTtsRealtimeCallback() {

// File file = new File("result_24k.pcm");

// FileOutputStream fos = new FileOutputStream(file);

@Override

public void onOpen() {

System.out.println("connection opened");

System.out.println("Enter text and press Enter to send. Enter 'quit' to exit the program.");

}

@Override

public void onEvent(JsonObject message) {

String type = message.get("type").getAsString();

switch(type) {

case "session.created":

System.out.println("start session: " + message.get("session").getAsJsonObject().get("id").getAsString());

break;

case "response.audio.delta":

String recvAudioB64 = message.get("delta").getAsString();

byte[] rawAudio = Base64.getDecoder().decode(recvAudioB64);

// fos.write(rawAudio);

// Plays the audio in real time.

audioPlayer.write(recvAudioB64);

break;

case "response.done":

System.out.println("response done");

// Waits for the audio playback to complete.

try {

audioPlayer.waitForComplete();

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

// Prepares for the next input.

completeLatch.get().countDown();

break;

case "session.finished":

System.out.println("session finished");

if (qwenTtsRef.get() != null) {

System.out.println("[Metric] response: " + qwenTtsRef.get().getResponseId() +

", first audio delay: " + qwenTtsRef.get().getFirstAudioDelay() + " ms");

}

completeLatch.get().countDown();

default:

break;

}

}

@Override

public void onClose(int code, String reason) {

System.out.println("connection closed code: " + code + ", reason: " + reason);

try {

// fos.close();

// Waits for playback to complete and then shuts down the player.

audioPlayer.waitForComplete();

audioPlayer.shutdown();

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

});

qwenTtsRef.set(qwenTtsRealtime);

try {

qwenTtsRealtime.connect();

} catch (NoApiKeyException e) {

throw new RuntimeException(e);

}

QwenTtsRealtimeConfig config = QwenTtsRealtimeConfig.builder()

.voice("Cherry")

.responseFormat(QwenTtsRealtimeAudioFormat.PCM_24000HZ_MONO_16BIT)

.mode("commit")

// To use the instruction control feature, uncomment the following lines and replace the model with qwen3-tts-instruct-flash-realtime.

// .instructions("")

// .optimizeInstructions(true)

.build();

qwenTtsRealtime.updateSession(config);

// Reads user input in a loop.

while (true) {

System.out.print("Enter the text to synthesize: ");

String text = scanner.nextLine();

// If the user enters 'quit', exit the program.

if ("quit".equalsIgnoreCase(text.trim())) {

System.out.println("Closing the connection...");

qwenTtsRealtime.finish();

completeLatch.get().await();

break;

}

// If the user input is empty, skip.

if (text.trim().isEmpty()) {

continue;

}

// Re-initializes the countdown latch.

completeLatch.set(new CountDownLatch(1));

// Sends the text.

qwenTtsRealtime.appendText(text);

qwenTtsRealtime.commit();

// Waits for the current synthesis to complete.

completeLatch.get().await();

}

// Cleans up resources.

audioPlayer.waitForComplete();

audioPlayer.shutdown();

scanner.close();

System.exit(0);

}

}

1

Prepare runtime environment

Install pyaudio based on your operating system.Then, install WebSocket dependencies using pip:

- macOS

- Debian/Ubuntu

- CentOS

- Windows

Copy

brew install portaudio && pip install pyaudio

Copy

sudo apt-get install python3-pyaudio

or

pip install pyaudio

Copy

sudo yum install -y portaudio portaudio-devel && pip install pyaudio

Copy

pip install pyaudio

Copy

pip install websocket-client==1.8.0 websockets

2

Create client

Create a new Python file locally named

tts_realtime_client.py and copy the following code into the file:tts_realtime_client.py

tts_realtime_client.py

Copy

# -- coding: utf-8 --

import asyncio

import websockets

import json

import base64

import time

from typing import Optional, Callable, Dict, Any

from enum import Enum

class SessionMode(Enum):

SERVER_COMMIT = "server_commit"

COMMIT = "commit"

class TTSRealtimeClient:

"""

Client for interacting with TTS Realtime API.

This class provides methods to connect to the TTS Realtime API, send text data, receive audio output, and manage WebSocket connections.

Attributes:

base_url (str):

Base URL for the Realtime API.

api_key (str):

API Key for authentication.

voice (str):

Voice used by the server for speech synthesis.

mode (SessionMode):

Session mode, either server_commit or commit.

audio_callback (Callable[[bytes], None]):

Callback function to receive audio data.

language_type(str)

Language for synthesized speech. Options: Chinese, English, German, Italian, Portuguese, Spanish, Japanese, Korean, French, Russian, Auto

"""

def __init__(

self,

base_url: str,

api_key: str,

voice: str = "Cherry",

mode: SessionMode = SessionMode.SERVER_COMMIT,

audio_callback: Optional[Callable[[bytes], None]] = None,

language_type: str = "Auto"):

self.base_url = base_url

self.api_key = api_key

self.voice = voice

self.mode = mode

self.ws = None

self.audio_callback = audio_callback

self.language_type = language_type

# Current response status

self._current_response_id = None

self._current_item_id = None

self._is_responding = False

self._response_done_future = None

async def connect(self) -> None:

"""Establish WebSocket connection with TTS Realtime API."""

headers = {

"Authorization": f"Bearer {self.api_key}"

}

self.ws = await websockets.connect(self.base_url, additional_headers=headers)

# Set default session configuration

await self.update_session({

"mode": self.mode.value,

"voice": self.voice,

# Uncomment the lines below and replace model with qwen3-tts-instruct-flash-realtime in server_commit.py or commit.py to use instruction control

# "instructions": "Speak quickly with a noticeably rising intonation, suitable for introducing fashion products.",

# "optimize_instructions": true

"language_type": self.language_type,

"response_format": "pcm",

"sample_rate": 24000

})

async def send_event(self, event) -> None:

"""Send event to server."""

event['event_id'] = "event_" + str(int(time.time() * 1000))

print(f"Sending event: type={event['type']}, event_id={event['event_id']}")

await self.ws.send(json.dumps(event))

async def update_session(self, config: Dict[str, Any]) -> None:

"""Update session configuration."""

event = {

"type": "session.update",

"session": config

}

print("Updating session configuration: ", event)

await self.send_event(event)

async def append_text(self, text: str) -> None:

"""Send text data to API."""

event = {

"type": "input_text_buffer.append",

"text": text

}

await self.send_event(event)

async def commit_text_buffer(self) -> None:

"""Submit text buffer to trigger processing."""

event = {

"type": "input_text_buffer.commit"

}

await self.send_event(event)

async def clear_text_buffer(self) -> None:

"""Clear text buffer."""

event = {

"type": "input_text_buffer.clear"

}

await self.send_event(event)

async def finish_session(self) -> None:

"""End session."""

event = {

"type": "session.finish"

}

await self.send_event(event)

async def wait_for_response_done(self):

"""Wait for response.done event"""

if self._response_done_future:

await self._response_done_future

async def handle_messages(self) -> None:

"""Handle messages from server."""

try:

async for message in self.ws:

event = json.loads(message)

event_type = event.get("type")

if event_type != "response.audio.delta":

print(f"Received event: {event_type}")

if event_type == "error":

print("Error: ", event.get('error', {}))

continue

elif event_type == "session.created":

print("Session created, ID: ", event.get('session', {}).get('id'))

elif event_type == "session.updated":

print("Session updated, ID: ", event.get('session', {}).get('id'))

elif event_type == "input_text_buffer.committed":

print("Text buffer committed, item ID: ", event.get('item_id'))

elif event_type == "input_text_buffer.cleared":

print("Text buffer cleared")

elif event_type == "response.created":

self._current_response_id = event.get("response", {}).get("id")

self._is_responding = True

# Create new future to wait for response.done

self._response_done_future = asyncio.Future()

print("Response created, ID: ", self._current_response_id)

elif event_type == "response.output_item.added":

self._current_item_id = event.get("item", {}).get("id")

print("Output item added, ID: ", self._current_item_id)

# Handle audio delta

elif event_type == "response.audio.delta" and self.audio_callback:

audio_bytes = base64.b64decode(event.get("delta", ""))

self.audio_callback(audio_bytes)

elif event_type == "response.audio.done":

print("Audio generation completed")

elif event_type == "response.done":

self._is_responding = False

self._current_response_id = None

self._current_item_id = None

# Mark future as complete

if self._response_done_future and not self._response_done_future.done():

self._response_done_future.set_result(True)

print("Response completed")

elif event_type == "session.finished":

print("Session ended")

except websockets.exceptions.ConnectionClosed:

print("Connection closed")

except Exception as e:

print("Error handling messages: ", str(e))

async def close(self) -> None:

"""Close WebSocket connection."""

if self.ws:

await self.ws.close()

3

Select speech synthesis mode

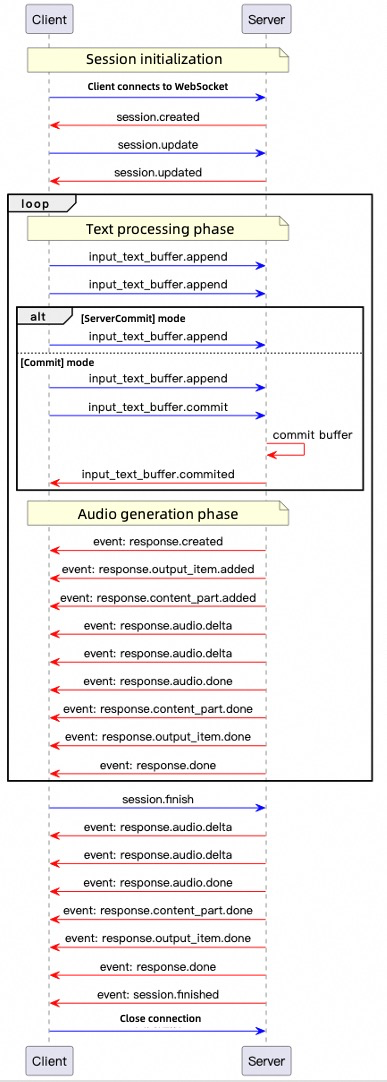

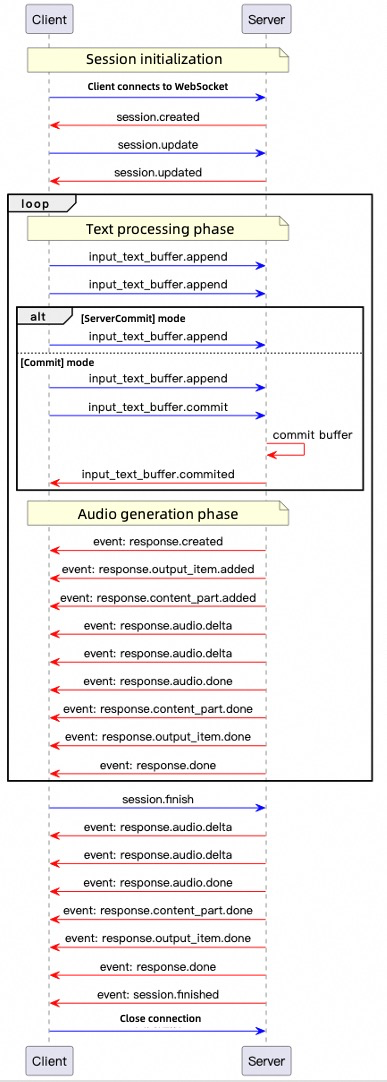

The Realtime API supports two modes:

- Server commit mode: The client sends text only. The server intelligently determines text segmentation and synthesis timing. Use this mode for low-latency scenarios without manual synthesis control, such as GPS navigation.

- Commit mode: Add text to a buffer first, then trigger the server to synthesize the specified text. Use this mode for scenarios requiring fine-grained control over pauses and sentence breaks, such as news broadcasting.

- Server commit mode

- Commit mode

Create another Python file named

Run

server_commit.py in the same directory as tts_realtime_client.py, and copy the following code into the file:server_commit.py

server_commit.py

Copy

import os

import asyncio

import logging

import wave

from tts_realtime_client import TTSRealtimeClient, SessionMode

import pyaudio

# QwenTTS service configuration

# Replace model with qwen3-tts-instruct-flash-realtime and uncomment instructions in tts_realtime_client.py to use instruction control

URL = "wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime?model=qwen3-tts-flash-realtime"

# Replace with your Qwen Cloud API Key if environment variable is not configured: API_KEY="sk-xxx"

API_KEY = os.getenv("DASHSCOPE_API_KEY")

if not API_KEY:

raise ValueError("Please set DASHSCOPE_API_KEY environment variable")

# Collect audio data

_audio_chunks = []

# Real-time playback settings

_AUDIO_SAMPLE_RATE = 24000

_audio_pyaudio = pyaudio.PyAudio()

_audio_stream = None # Will be opened at runtime

def _audio_callback(audio_bytes: bytes):

"""TTSRealtimeClient audio callback: play and cache in real time"""

global _audio_stream

if _audio_stream is not None:

try:

_audio_stream.write(audio_bytes)

except Exception as exc:

logging.error(f"PyAudio playback error: {exc}")

_audio_chunks.append(audio_bytes)

logging.info(f"Received audio chunk: {len(audio_bytes)} bytes")

def _save_audio_to_file(filename: str = "output.wav", sample_rate: int = 24000) -> bool:

"""Save collected audio data to WAV file"""

if not _audio_chunks:

logging.warning("No audio data to save")

return False

try:

audio_data = b"".join(_audio_chunks)

with wave.open(filename, 'wb') as wav_file:

wav_file.setnchannels(1) # Mono

wav_file.setsampwidth(2) # 16-bit

wav_file.setframerate(sample_rate)

wav_file.writeframes(audio_data)

logging.info(f"Audio saved to: {filename}")

return True

except Exception as exc:

logging.error(f"Failed to save audio: {exc}")

return False

async def _produce_text(client: TTSRealtimeClient):

"""Send text fragments to server"""

text_fragments = [

"Qwen Cloud is an all-in-one platform for model development and application building.",

"Both developers and business personnel can deeply participate in designing and building model applications.",

"You can develop a model application in just 5 minutes through simple UI operations,",

"or train a custom model within hours, allowing you to focus more on application innovation.",

]

logging.info("Sending text fragments…")

for text in text_fragments:

logging.info(f"Sending fragment: {text}")

await client.append_text(text)

await asyncio.sleep(0.1) # Brief delay between fragments

# Wait for server to complete internal processing before ending session

await asyncio.sleep(1.0)

await client.finish_session()

async def _run_demo():

"""Run complete demo"""

global _audio_stream

# Open PyAudio output stream

_audio_stream = _audio_pyaudio.open(

format=pyaudio.paInt16,

channels=1,

rate=_AUDIO_SAMPLE_RATE,

output=True,

frames_per_buffer=1024

)

client = TTSRealtimeClient(

base_url=URL,

api_key=API_KEY,

voice="Cherry",

mode=SessionMode.SERVER_COMMIT,

audio_callback=_audio_callback

)

# Establish connection

await client.connect()

# Execute message handling and text sending in parallel

consumer_task = asyncio.create_task(client.handle_messages())

producer_task = asyncio.create_task(_produce_text(client))

await producer_task # Wait for text sending to complete

# Wait for response.done

await client.wait_for_response_done()

# Close connection and cancel consumer task

await client.close()

consumer_task.cancel()

# Close audio stream

if _audio_stream is not None:

_audio_stream.stop_stream()

_audio_stream.close()

_audio_pyaudio.terminate()

# Save audio data

os.makedirs("outputs", exist_ok=True)

_save_audio_to_file(os.path.join("outputs", "qwen_tts_output.wav"))

def main():

"""Synchronous entry point"""

logging.basicConfig(

level=logging.INFO,

format='%(asctime)s [%(levelname)s] %(message)s',

datefmt='%Y-%m-%d %H:%M:%S'

)

logging.info("Starting QwenTTS Realtime Client demo…")

asyncio.run(_run_demo())

if __name__ == "__main__":

main()

server_commit.py to listen to real-time audio generated by the Realtime API.Create another Python file named

Run

commit.py in the same directory as tts_realtime_client.py, and copy the following code into the file:commit.py

commit.py

Copy

import os

import asyncio

import logging

import wave

from tts_realtime_client import TTSRealtimeClient, SessionMode

import pyaudio

# QwenTTS service configuration

# Replace model with qwen3-tts-instruct-flash-realtime and uncomment instructions in tts_realtime_client.py to use instruction control

URL = "wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime?model=qwen3-tts-flash-realtime"

# Replace with your Qwen Cloud API Key if environment variable is not configured: API_KEY="sk-xxx"

API_KEY = os.getenv("DASHSCOPE_API_KEY")

if not API_KEY:

raise ValueError("Please set DASHSCOPE_API_KEY environment variable")

# Collect audio data

_audio_chunks = []

_AUDIO_SAMPLE_RATE = 24000

_audio_pyaudio = pyaudio.PyAudio()

_audio_stream = None

def _audio_callback(audio_bytes: bytes):

"""TTSRealtimeClient audio callback: play and cache in real time"""

global _audio_stream

if _audio_stream is not None:

try:

_audio_stream.write(audio_bytes)

except Exception as exc:

logging.error(f"PyAudio playback error: {exc}")

_audio_chunks.append(audio_bytes)

logging.info(f"Received audio chunk: {len(audio_bytes)} bytes")

def _save_audio_to_file(filename: str = "output.wav", sample_rate: int = 24000) -> bool:

"""Save collected audio data to WAV file"""

if not _audio_chunks:

logging.warning("No audio data to save")

return False

try:

audio_data = b"".join(_audio_chunks)

with wave.open(filename, 'wb') as wav_file:

wav_file.setnchannels(1) # Mono

wav_file.setsampwidth(2) # 16-bit

wav_file.setframerate(sample_rate)

wav_file.writeframes(audio_data)

logging.info(f"Audio saved to: {filename}")

return True

except Exception as exc:

logging.error(f"Failed to save audio: {exc}")

return False

async def _user_input_loop(client: TTSRealtimeClient):

"""Continuously get user input and send text. When user enters empty text, send commit event and end current session"""

print("Enter text (press Enter directly to send commit event and end current session, press Ctrl+C or Ctrl+D to exit entire program):")

while True:

try:

user_text = input("> ")

if not user_text: # User entered empty input

# Empty input signifies end of conversation: submit buffer -> end session -> break loop

logging.info("Empty input, sending commit event and ending current session")

await client.commit_text_buffer()

# Wait briefly for server to process commit to prevent losing audio from premature session end

await asyncio.sleep(0.3)

await client.finish_session()

break # Exit user input loop directly, no need to press Enter again

else:

logging.info(f"Sending text: {user_text}")

await client.append_text(user_text)

except EOFError: # User pressed Ctrl+D

break

except KeyboardInterrupt: # User pressed Ctrl+C

break

# End session

logging.info("Ending session...")

async def _run_demo():

"""Run complete demo"""

global _audio_stream

# Open PyAudio output stream

_audio_stream = _audio_pyaudio.open(

format=pyaudio.paInt16,

channels=1,

rate=_AUDIO_SAMPLE_RATE,

output=True,

frames_per_buffer=1024

)

client = TTSRealtimeClient(

base_url=URL,

api_key=API_KEY,

voice="Cherry",

mode=SessionMode.COMMIT, # Change to COMMIT mode

audio_callback=_audio_callback

)

# Establish connection

await client.connect()

# Execute message handling and user input in parallel

consumer_task = asyncio.create_task(client.handle_messages())

producer_task = asyncio.create_task(_user_input_loop(client))

await producer_task # Wait for user input to complete

# Wait for response.done

await client.wait_for_response_done()

# Close connection and cancel consumer task

await client.close()

consumer_task.cancel()

# Close audio stream

if _audio_stream is not None:

_audio_stream.stop_stream()

_audio_stream.close()

_audio_pyaudio.terminate()

# Save audio data

os.makedirs("outputs", exist_ok=True)

_save_audio_to_file(os.path.join("outputs", "qwen_tts_output.wav"))

def main():

logging.basicConfig(

level=logging.INFO,

format='%(asctime)s [%(levelname)s] %(message)s',

datefmt='%Y-%m-%d %H:%M:%S'

)

logging.info("Starting QwenTTS Realtime Client demo…")

asyncio.run(_run_demo())

if __name__ == "__main__":

main()

commit.py to input multiple texts for synthesis. Press Enter without entering text to listen to the audio returned by the Realtime API through your speakers.The voice cloning service does not provide preview audio. Test and evaluate the effect through the speech synthesis interface. Use short text for initial testing.This example adapts the "server commit mode" code, replacing the

voice parameter with a cloned voice.- Key principle: Match the voice cloning model (

target_model) with the speech synthesis model (model). Otherwise, synthesis fails. - The example uses a local audio file

voice.mp3for voice cloning. Replace it when running the code.

- Python

- Java

Copy

# coding=utf-8

# Installation instructions for pyaudio:

# APPLE Mac OS X

# brew install portaudio

# pip install pyaudio

# Debian/Ubuntu

# sudo apt-get install python-pyaudio python3-pyaudio

# or

# pip install pyaudio

# CentOS

# sudo yum install -y portaudio portaudio-devel && pip install pyaudio

# Microsoft Windows

# python -m pip install pyaudio

import pyaudio

import os

import requests

import base64

import pathlib

import threading

import time

import dashscope # DashScope Python SDK version must be at least 1.23.9

from dashscope.audio.qwen_tts_realtime import QwenTtsRealtime, QwenTtsRealtimeCallback, AudioFormat

# ======= Constants =======

DEFAULT_TARGET_MODEL = "qwen3-tts-vc-realtime-2026-01-15" # Use the same model for voice cloning and speech synthesis

DEFAULT_PREFERRED_NAME = "guanyu"

DEFAULT_AUDIO_MIME_TYPE = "audio/mpeg"

VOICE_FILE_PATH = "voice.mp3" # Relative path to local audio file for voice cloning

TEXT_TO_SYNTHESIZE = [

'Right? I really love this kind of supermarket,',

'especially during Chinese New Year',

'when I go shopping',

'I feel',

'super super happy!',

'I want to buy so many things!'

]

def create_voice(file_path: str,

target_model: str = DEFAULT_TARGET_MODEL,

preferred_name: str = DEFAULT_PREFERRED_NAME,

audio_mime_type: str = DEFAULT_AUDIO_MIME_TYPE) -> str:

"""

Create voice and return voice parameter

"""

# Replace with your Qwen Cloud API Key if environment variable is not configured: api_key = "sk-xxx"

api_key = os.getenv("DASHSCOPE_API_KEY")

file_path_obj = pathlib.Path(file_path)

if not file_path_obj.exists():

raise FileNotFoundError(f"Audio file not found: {file_path}")

base64_str = base64.b64encode(file_path_obj.read_bytes()).decode()

data_uri = f"data:{audio_mime_type};base64,{base64_str}"

url = "https://dashscope-intl.aliyuncs.com/api/v1/services/audio/tts/customization"

payload = {

"model": "qwen-voice-enrollment", # Do not modify this value

"input": {

"action": "create",

"target_model": target_model,

"preferred_name": preferred_name,

"audio": {"data": data_uri}

}

}

headers = {

"Authorization": f"Bearer {api_key}",

"Content-Type": "application/json"

}

resp = requests.post(url, json=payload, headers=headers)

if resp.status_code != 200:

raise RuntimeError(f"Voice creation failed: {resp.status_code}, {resp.text}")

try:

return resp.json()["output"]["voice"]

except (KeyError, ValueError) as e:

raise RuntimeError(f"Failed to parse voice response: {e}")

def init_dashscope_api_key():

"""

Initialize DashScope SDK API key

"""

# Replace with your Qwen Cloud API Key if environment variable is not configured: dashscope.api_key = "sk-xxx"

dashscope.api_key = os.getenv("DASHSCOPE_API_KEY")

# ======= Callback class =======

class MyCallback(QwenTtsRealtimeCallback):

"""

Custom TTS streaming callback

"""

def __init__(self):

self.complete_event = threading.Event()

self._player = pyaudio.PyAudio()

self._stream = self._player.open(

format=pyaudio.paInt16, channels=1, rate=24000, output=True

)

def on_open(self) -> None:

print('[TTS] Connection established')

def on_close(self, close_status_code, close_msg) -> None:

self._stream.stop_stream()

self._stream.close()

self._player.terminate()

print(f'[TTS] Connection closed code={close_status_code}, msg={close_msg}')

def on_event(self, response: dict) -> None:

try:

event_type = response.get('type', '')

if event_type == 'session.created':

print(f'[TTS] Session started: {response["session"]["id"]}')

elif event_type == 'response.audio.delta':

audio_data = base64.b64decode(response['delta'])

self._stream.write(audio_data)

elif event_type == 'response.done':

print(f'[TTS] Response completed, Response ID: {qwen_tts_realtime.get_last_response_id()}')

elif event_type == 'session.finished':

print('[TTS] Session ended')

self.complete_event.set()

except Exception as e:

print(f'[Error] Error handling callback event: {e}')

def wait_for_finished(self):

self.complete_event.wait()

# ======= Main execution logic =======

if __name__ == '__main__':

init_dashscope_api_key()

print('[System] Initializing Qwen TTS Realtime ...')

callback = MyCallback()

qwen_tts_realtime = QwenTtsRealtime(

model=DEFAULT_TARGET_MODEL,

callback=callback,

url='wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime'

)

qwen_tts_realtime.connect()

qwen_tts_realtime.update_session(

voice=create_voice(VOICE_FILE_PATH), # Replace voice parameter with cloned custom voice

response_format=AudioFormat.PCM_24000HZ_MONO_16BIT,

mode='server_commit'

)

for text_chunk in TEXT_TO_SYNTHESIZE:

print(f'[Sending text]: {text_chunk}')

qwen_tts_realtime.append_text(text_chunk)

time.sleep(0.1)

qwen_tts_realtime.finish()

callback.wait_for_finished()

print(f'[Metric] session_id={qwen_tts_realtime.get_session_id()}, '

f'first_audio_delay={qwen_tts_realtime.get_first_audio_delay()}s')

You need to import the Gson dependency. If you use Maven or Gradle, add the dependency as follows:

- Maven

- Gradle

Add the following to your

pom.xml:Copy

<!-- https://mvnrepository.com/artifact/com.google.code.gson/gson -->

<dependency>

<groupId>com.google.code.gson</groupId>

<artifactId>gson</artifactId>

<version>2.13.1</version>

</dependency>

Add the following to your

build.gradle:Copy

// https://mvnrepository.com/artifact/com.google.code.gson/gson

implementation("com.google.code.gson:gson:2.13.1")

Copy

import com.alibaba.dashscope.audio.qwen_tts_realtime.*;

import com.alibaba.dashscope.exception.NoApiKeyException;

import com.google.gson.Gson;

import com.google.gson.JsonObject;

import javax.sound.sampled.*;

import java.io.*;

import java.net.HttpURLConnection;

import java.net.URL;

import java.nio.file.*;

import java.nio.charset.StandardCharsets;

import java.util.Base64;

import java.util.Queue;

import java.util.concurrent.CountDownLatch;

import java.util.concurrent.atomic.AtomicReference;

import java.util.concurrent.ConcurrentLinkedQueue;

import java.util.concurrent.atomic.AtomicBoolean;

public class Main {

// ===== Constants =====

// Use the same model for voice cloning and speech synthesis

private static final String TARGET_MODEL = "qwen3-tts-vc-realtime-2026-01-15";

private static final String PREFERRED_NAME = "guanyu";

// Relative path to local audio file for voice cloning

private static final String AUDIO_FILE = "voice.mp3";

private static final String AUDIO_MIME_TYPE = "audio/mpeg";

private static String[] textToSynthesize = {

"Right? I really love this kind of supermarket",

"especially during Chinese New Year",

"when I go shopping",

"I feel",

"super super happy!",

"I want to buy so many things!"

};

// Generate data URI

public static String toDataUrl(String filePath) throws IOException {

byte[] bytes = Files.readAllBytes(Paths.get(filePath));

String encoded = Base64.getEncoder().encodeToString(bytes);

return "data:" + AUDIO_MIME_TYPE + ";base64," + encoded;

}

// Call API to create voice

public static String createVoice() throws Exception {

// Replace with your Qwen Cloud API Key if environment variable is not configured: String apiKey = "sk-xxx"

String apiKey = System.getenv("DASHSCOPE_API_KEY");

String jsonPayload =

"{"

+ "\"model\": \"qwen-voice-enrollment\"," // Do not modify this value

+ "\"input\": {"

+ "\"action\": \"create\","

+ "\"target_model\": \"" + TARGET_MODEL + "\","

+ "\"preferred_name\": \"" + PREFERRED_NAME + "\","

+ "\"audio\": {"

+ "\"data\": \"" + toDataUrl(AUDIO_FILE) + "\""

+ "}"

+ "}"

+ "}";

HttpURLConnection con = (HttpURLConnection) new URL("https://dashscope-intl.aliyuncs.com/api/v1/services/audio/tts/customization").openConnection();

con.setRequestMethod("POST");

con.setRequestProperty("Authorization", "Bearer " + apiKey);

con.setRequestProperty("Content-Type", "application/json");

con.setDoOutput(true);

try (OutputStream os = con.getOutputStream()) {

os.write(jsonPayload.getBytes(StandardCharsets.UTF_8));

}

int status = con.getResponseCode();

System.out.println("HTTP status code: " + status);

try (BufferedReader br = new BufferedReader(

new InputStreamReader(status >= 200 && status < 300 ? con.getInputStream() : con.getErrorStream(),

StandardCharsets.UTF_8))) {

StringBuilder response = new StringBuilder();

String line;

while ((line = br.readLine()) != null) {

response.append(line);

}

System.out.println("Response content: " + response);

if (status == 200) {

JsonObject jsonObj = new Gson().fromJson(response.toString(), JsonObject.class);

return jsonObj.getAsJsonObject("output").get("voice").getAsString();

}

throw new IOException("Voice creation failed: " + status + " - " + response);

}

}

// Real-time PCM audio player class

public static class RealtimePcmPlayer {

private int sampleRate;

private SourceDataLine line;

private AudioFormat audioFormat;

private Thread decoderThread;

private Thread playerThread;

private AtomicBoolean stopped = new AtomicBoolean(false);

private Queue<String> b64AudioBuffer = new ConcurrentLinkedQueue<>();

private Queue<byte[]> RawAudioBuffer = new ConcurrentLinkedQueue<>();

// Constructor to initialize audio format and audio line

public RealtimePcmPlayer(int sampleRate) throws LineUnavailableException {

this.sampleRate = sampleRate;

this.audioFormat = new AudioFormat(this.sampleRate, 16, 1, true, false);

DataLine.Info info = new DataLine.Info(SourceDataLine.class, audioFormat);

line = (SourceDataLine) AudioSystem.getLine(info);

line.open(audioFormat);

line.start();

decoderThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

String b64Audio = b64AudioBuffer.poll();

if (b64Audio != null) {

byte[] rawAudio = Base64.getDecoder().decode(b64Audio);

RawAudioBuffer.add(rawAudio);

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

playerThread = new Thread(new Runnable() {

@Override

public void run() {

while (!stopped.get()) {

byte[] rawAudio = RawAudioBuffer.poll();

if (rawAudio != null) {

try {

playChunk(rawAudio);

} catch (IOException e) {

throw new RuntimeException(e);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

} else {

try {

Thread.sleep(100);

} catch (InterruptedException e) {

throw new RuntimeException(e);

}

}

}

}

});

decoderThread.start();

playerThread.start();

}

// Play an audio chunk and block until playback completes

private void playChunk(byte[] chunk) throws IOException, InterruptedException {

if (chunk == null || chunk.length == 0) return;

int bytesWritten = 0;

while (bytesWritten < chunk.length) {

bytesWritten += line.write(chunk, bytesWritten, chunk.length - bytesWritten);

}

int audioLength = chunk.length / (this.sampleRate*2/1000);

// Wait for audio in buffer to finish playing

Thread.sleep(audioLength - 10);

}

public void write(String b64Audio) {

b64AudioBuffer.add(b64Audio);

}

public void cancel() {

b64AudioBuffer.clear();

RawAudioBuffer.clear();

}

public void waitForComplete() throws InterruptedException {

while (!b64AudioBuffer.isEmpty() || !RawAudioBuffer.isEmpty()) {

Thread.sleep(100);

}

line.drain();

}

public void shutdown() throws InterruptedException {

stopped.set(true);

decoderThread.join();

playerThread.join();

if (line != null && line.isRunning()) {

line.drain();

line.close();

}

}

}

public static void main(String[] args) throws Exception {

QwenTtsRealtimeParam param = QwenTtsRealtimeParam.builder()

.model(TARGET_MODEL)

.url("wss://dashscope-intl.aliyuncs.com/api-ws/v1/realtime")

// Replace with your Qwen Cloud API Key if environment variable is not configured: .apikey("sk-xxx")

.apikey(System.getenv("DASHSCOPE_API_KEY"))

.build();

AtomicReference<CountDownLatch> completeLatch = new AtomicReference<>(new CountDownLatch(1));

final AtomicReference<QwenTtsRealtime> qwenTtsRef = new AtomicReference<>(null);

// Create real-time audio player instance

RealtimePcmPlayer audioPlayer = new RealtimePcmPlayer(24000);

QwenTtsRealtime qwenTtsRealtime = new QwenTtsRealtime(param, new QwenTtsRealtimeCallback() {

@Override

public void onOpen() {

// Handle connection established

}

@Override

public void onEvent(JsonObject message) {

String type = message.get("type").getAsString();

switch(type) {

case "session.created":

// Handle session created

break;

case "response.audio.delta":

String recvAudioB64 = message.get("delta").getAsString();

// Play audio in real time

audioPlayer.write(recvAudioB64);

break;

case "response.done":

// Handle response completed

break;

case "session.finished":

// Handle session finished

completeLatch.get().countDown();

default:

break;

}

}

@Override

public void onClose(int code, String reason) {

// Handle connection closed

}

});

qwenTtsRef.set(qwenTtsRealtime);

try {

qwenTtsRealtime.connect();

} catch (NoApiKeyException e) {

throw new RuntimeException(e);

}

QwenTtsRealtimeConfig config = QwenTtsRealtimeConfig.builder()

.voice(createVoice()) // Replace voice parameter with cloned custom voice

.responseFormat(QwenTtsRealtimeAudioFormat.PCM_24000HZ_MONO_16BIT)

.mode("server_commit")

.build();

qwenTtsRealtime.updateSession(config);

for (String text:textToSynthesize) {

qwenTtsRealtime.appendText(text);

Thread.sleep(100);

}

qwenTtsRealtime.finish();

completeLatch.get().await();

// Wait for audio playback to complete and shut down player

audioPlayer.waitForComplete();

audioPlayer.shutdown();

System.exit(0);

}

}

The voice design feature returns preview audio data. Listen to this preview audio first to confirm the effect meets your expectations before using it for speech synthesis.

1

Generate a custom voice and preview the result

If you are satisfied with the result, proceed to the next step. Otherwise, generate it again.

- Python

- Java

Copy

import requests

import base64

import os

def create_voice_and_play():

# If the environment variable is not set, replace the following line with your API key: api_key = "sk-xxx"

api_key = os.getenv("DASHSCOPE_API_KEY")

if not api_key:

print("Error: DASHSCOPE_API_KEY environment variable not found. Please set the API key first.")

return None, None, None

# Prepare request data

headers = {

"Authorization": f"Bearer {api_key}",

"Content-Type": "application/json"

}

data = {

"model": "qwen-voice-design",

"input": {

"action": "create",

"target_model": "qwen3-tts-vd-realtime-2026-01-15",

"voice_prompt": "A composed middle-aged male announcer with a deep, rich and magnetic voice, a steady speaking speed and clear articulation, is suitable for news broadcasting or documentary commentary.",

"preview_text": "Dear listeners, hello everyone. Welcome to the evening news.",

"preferred_name": "announcer",

"language": "en"

},

"parameters": {

"sample_rate": 24000,

"response_format": "wav"

}

}

url = "https://dashscope-intl.aliyuncs.com/api/v1/services/audio/tts/customization"

try:

# Send the request

response = requests.post(

url,

headers=headers,

json=data,

timeout=60 # Add a timeout setting

)

if response.status_code == 200:

result = response.json()

# Get the voice name

voice_name = result["output"]["voice"]

print(f"Voice name: {voice_name}")

# Get the preview audio data

base64_audio = result["output"]["preview_audio"]["data"]

# Decode the Base64 audio data

audio_bytes = base64.b64decode(base64_audio)

# Save the audio file locally

filename = f"{voice_name}_preview.wav"

# Write the audio data to a local file

with open(filename, 'wb') as f:

f.write(audio_bytes)

print(f"Audio saved to local file: {filename}")

print(f"File path: {os.path.abspath(filename)}")

return voice_name, audio_bytes, filename

else:

print(f"Request failed with status code: {response.status_code}")

print(f"Response content: {response.text}")

return None, None, None

except requests.exceptions.RequestException as e:

print(f"A network request error occurred: {e}")

return None, None, None

except KeyError as e:

print(f"Response data format error, missing required field: {e}")

print(f"Response content: {response.text if 'response' in locals() else 'No response'}")

return None, None, None

except Exception as e:

print(f"An unknown error occurred: {e}")

return None, None, None

if __name__ == "__main__":

print("Starting to create voice...")

voice_name, audio_data, saved_filename = create_voice_and_play()

if voice_name:

print(f"\nSuccessfully created voice '{voice_name}'")

print(f"Audio file saved as: '{saved_filename}'")

print(f"File size: {os.path.getsize(saved_filename)} bytes")

else:

print("\nVoice creation failed")

You need to import the Gson dependency. If you are using Maven or Gradle, add the dependency as follows:

- Maven

- Gradle

Add the following content to

pom.xml:Copy

<!-- https://mvnrepository.com/artifact/com.google.code.gson/gson -->

<dependency>

<groupId>com.google.code.gson</groupId>

<artifactId>gson</artifactId>

<version>2.13.1</version>

</dependency>

Add the following content to

build.gradle:Copy

// https://mvnrepository.com/artifact/com.google.code.gson/gson

implementation("com.google.code.gson:gson:2.13.1")

Copy

import com.google.gson.JsonObject;

import com.google.gson.JsonParser;

import java.io.*;

import java.net.HttpURLConnection;

import java.net.URL;

import java.util.Base64;

public class Main {

public static void main(String[] args) {

Main example = new Main();

example.createVoice();

}

public void createVoice() {

// If the environment variable is not set, replace the following line with your API key: String apiKey = "sk-xxx"

String apiKey = System.getenv("DASHSCOPE_API_KEY");

// Create the JSON request body string

String jsonBody = "{\n" +

" \"model\": \"qwen-voice-design\",\n" +

" \"input\": {\n" +

" \"action\": \"create\",\n" +

" \"target_model\": \"qwen3-tts-vd-realtime-2026-01-15\",\n" +

" \"voice_prompt\": \"A composed middle-aged male announcer with a deep, rich and magnetic voice, a steady speaking speed and clear articulation, is suitable for news broadcasting or documentary commentary.\",\n" +

" \"preview_text\": \"Dear listeners, hello everyone. Welcome to the evening news.\",\n" +

" \"preferred_name\": \"announcer\",\n" +

" \"language\": \"en\"\n" +

" },\n" +

" \"parameters\": {\n" +

" \"sample_rate\": 24000,\n" +

" \"response_format\": \"wav\"\n" +

" }\n" +

"}";

HttpURLConnection connection = null;

try {

URL url = new URL("https://dashscope-intl.aliyuncs.com/api/v1/services/audio/tts/customization");

connection = (HttpURLConnection) url.openConnection();

// Set the request method and headers

connection.setRequestMethod("POST");

connection.setRequestProperty("Authorization", "Bearer " + apiKey);

connection.setRequestProperty("Content-Type", "application/json");

connection.setDoOutput(true);

connection.setDoInput(true);

// Send the request body

try (OutputStream os = connection.getOutputStream()) {

byte[] input = jsonBody.getBytes("UTF-8");

os.write(input, 0, input.length);

os.flush();

}

// Get the response

int responseCode = connection.getResponseCode();

if (responseCode == HttpURLConnection.HTTP_OK) {

// Read the response content

StringBuilder response = new StringBuilder();

try (BufferedReader br = new BufferedReader(

new InputStreamReader(connection.getInputStream(), "UTF-8"))) {

String responseLine;

while ((responseLine = br.readLine()) != null) {

response.append(responseLine.trim());

}

}

// Parse the JSON response

JsonObject jsonResponse = JsonParser.parseString(response.toString()).getAsJsonObject();

JsonObject outputObj = jsonResponse.getAsJsonObject("output");

JsonObject previewAudioObj = outputObj.getAsJsonObject("preview_audio");

// Get the voice name

String voiceName = outputObj.get("voice").getAsString();

System.out.println("Voice name: " + voiceName);

// Get the Base64-encoded audio data

String base64Audio = previewAudioObj.get("data").getAsString();

// Decode the Base64 audio data

byte[] audioBytes = Base64.getDecoder().decode(base64Audio);

// Save the audio to a local file

String filename = voiceName + "_preview.wav";

saveAudioToFile(audioBytes, filename);

System.out.println("Audio saved to local file: " + filename);

} else {

// Read the error response

StringBuilder errorResponse = new StringBuilder();

try (BufferedReader br = new BufferedReader(

new InputStreamReader(connection.getErrorStream(), "UTF-8"))) {

String responseLine;

while ((responseLine = br.readLine()) != null) {

errorResponse.append(responseLine.trim());

}

}

System.out.println("Request failed with status code: " + responseCode);